"Machine learning is being integrated to efficiently process and simulate large amounts of ALICE data, improving performance while reducing computing cost!"

The ALICE experiment at CERN studies collisions of protons and heavy ions produced by the Large Hadron Collider. At the ALICE interaction point, these collisions occur at extremely high rates - up to around 750,000 proton–proton collisions or 50,000 heavy-ion collisions every second. In head-on heavy-ion collisions, thousands of particles can be produced simultaneously, creating exceptionally high track densities that must be reconstructed in real time.

As a result, the experiment must process enormous amounts of information. The ALICE detector produces data at rates of up to 1 terabyte per second, which are handled by a large online computing system equipped with around 2800 graphics processing units (GPUs). Many of the key reconstruction tasks, such as particle tracking and event reconstruction, are both highly complex and naturally parallelizable, making them particularly well-suited for machine learning approaches. In several of these tasks, trainable approaches such as neural networks now match or surpass the performance of previously developed algorithms.

Machine learning algorithms are trained on dedicated datasets to recognize specific patterns in the data. During this training process, the parameters of a neural network are gradually adjusted to improve the algorithm’s ability to identify these patterns. It is like studying for a vocabulary test: more repetition normally leads to better results.
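The role of repetition can be made concrete with a minimal sketch, assuming the simplest possible "network": a single artificial neuron (logistic regression) trained by stochastic gradient descent on a hypothetical toy dataset. The helper names, the learning rate, and the data are illustrative assumptions, not ALICE's training setup. Each full pass over the data (an epoch) nudges the parameters against the gradient of the loss, and the loss typically falls as the number of passes grows.

```python
import math
import random

def sigmoid(z: float) -> float:
    """Squash a raw score into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

def make_toy_data(n: int, seed: int = 0):
    """Hypothetical dataset: points (x1, x2) labelled 1 if x1 + x2 > 0, else 0."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x1, x2 = rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0)
        data.append(((x1, x2), 1.0 if x1 + x2 > 0 else 0.0))
    return data

def mean_log_loss(w, b, data) -> float:
    """Average cross-entropy between predictions and labels (lower is better)."""
    eps = 1e-12  # guards against log(0)
    total = 0.0
    for (x1, x2), y in data:
        p = sigmoid(w[0] * x1 + w[1] * x2 + b)
        total -= y * math.log(p + eps) + (1.0 - y) * math.log(1.0 - p + eps)
    return total / len(data)

def train(data, epochs: int, lr: float = 0.5):
    """Stochastic gradient descent: after each example, move the parameters
    a small step against the gradient of the log-loss."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for (x1, x2), y in data:
            p = sigmoid(w[0] * x1 + w[1] * x2 + b)
            err = p - y  # gradient of the log-loss with respect to the raw score
            w[0] -= lr * err * x1
            w[1] -= lr * err * x2
            b -= lr * err
    return w, b

data = make_toy_data(200)
for epochs in (0, 1, 20):
    w, b = train(data, epochs)
    print(f"{epochs:2d} epochs: loss = {mean_log_loss(w, b, data):.4f}")
```

With zero epochs the untrained neuron guesses 0.5 for every point; additional passes lower the loss, which is the "more repetition" effect in miniature. Real networks have many more parameters and layers, but the update rule follows the same principle.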

The problems tackled this way range from real-time data processing to offline detector calibration and data simulation. In real-time data processing, the computational constraints are extreme and pose an unavoidable barrier: if an algorithm is too slow (i.e. it requires too many computations), it cannot be used. These applications therefore use smaller models and lower numerical precision to reduce the computational cost. They are also optimized for the specific hardware they run on, allowing them to operate quickly and efficiently.
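The precision trade-off can be illustrated with a minimal sketch, assuming IEEE 754 half precision (16-bit floats) as the lower-precision format; the format choice, the weights, and the helper names are hypothetical. Python's `struct` module can round a value to half precision (format character `e`), which lets us compare a small weighted sum computed from exact and rounded values, and see how storage halves relative to 32-bit floats.

```python
import struct

def round_to_float16(x: float) -> float:
    """Round a value to IEEE 754 half precision (16 bits) and back,
    emulating storage in a lower-precision format."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def memory_bytes(n_params: int, bits_per_param: int) -> int:
    """Memory needed to store n_params values at the given bit width."""
    return n_params * bits_per_param // 8

# Hypothetical weights and inputs of one small network layer.
weights = [0.1, -0.2, 0.3, 0.05]
inputs = [1.5, 2.5, -0.5, 4.0]

exact = sum(w * x for w, x in zip(weights, inputs))
# Only the stored values are rounded here; the sum itself is still
# accumulated in Python's 64-bit floats.
approx = sum(round_to_float16(w) * round_to_float16(x)
             for w, x in zip(weights, inputs))

print(f"64-bit values: {exact:.6f}")
print(f"16-bit values: {approx:.6f} (difference {abs(exact - approx):.1e})")
print(f"1M parameters: {memory_bytes(1_000_000, 32)} B at 32 bits, "
      f"{memory_bytes(1_000_000, 16)} B at 16 bits")
```

The result changes only slightly while memory per value is halved. In practice the speed gains come from GPU hardware that computes natively in reduced precision, which this pure-Python emulation does not capture.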

In October 2025, the ALICE Collaboration deployed, for the first time, a neural-network-based algorithm for real-time processing in the experiment. Its purpose is to reconstruct clusters, small groups of signals generated as charged particles traverse the detector, from the raw readout of the Time Projection Chamber (TPC). These clusters form the fundamental input for particle tracking. An intuitive way to understand this process is to think of taking a photograph: the raw detector data is the unprocessed image, while the algorithm acts as a smart filter that removes noise and separates overlapping features, producing a cleaner, more interpretable image.

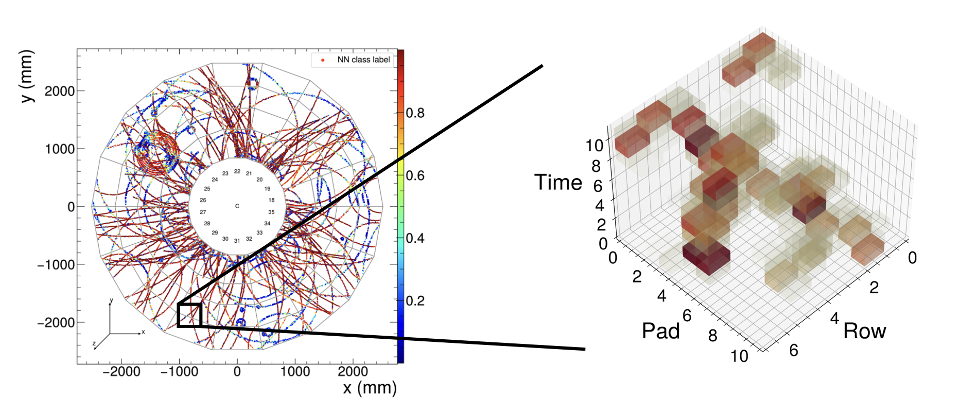

A reconstructed event in the ALICE TPC, using data processed with the neural-network cluster-finding algorithm. Pink dots are reconstructed clusters.

This task might seem easy when there are only a few tracks, but it becomes increasingly challenging the more the tracks overlap. As an analogy, imagine a car passing in front of a scene: you must distinguish which pixels in the image belong to the car and which to the background. Now imagine multiple cars of different colors, textures, and shapes that also overlap one another. In particle physics, beyond simply reconstructing clusters, the neural network assigns each of them a probability that it originates from a real particle traversing the detector rather than from the background. The figure below illustrates the neural network in action, classifying clusters in the TPC.

Left: Simulated particle clusters in the TPC detector. The colors (NN class labels) indicate the model’s output for each cluster: values closer to 1 (red) correspond to a higher likelihood that a cluster will be retained for further processing. Right: Overlapping particle clusters within a localized region of the detector, shown as a function of time.
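The idea of scoring each cluster and keeping those with values close to 1 can be sketched as follows, assuming the network emits a raw score per cluster that a sigmoid maps to a probability, followed by a fixed cut. The `Cluster` fields, the scores, and the 0.5 threshold are hypothetical; how ALICE actually chooses its selection is not described here.

```python
import math
from dataclasses import dataclass

@dataclass
class Cluster:
    """Hypothetical cluster record; the field names are illustrative only."""
    pad: float     # position along a readout row
    time: float    # drift-time coordinate
    charge: float  # summed signal amplitude
    logit: float   # assumed raw network output for this cluster

def class_probability(cluster: Cluster) -> float:
    """Map the raw network output to a value between 0 and 1 with a sigmoid;
    values near 1 mean 'likely from a real particle'."""
    return 1.0 / (1.0 + math.exp(-cluster.logit))

def select_clusters(clusters, threshold: float = 0.5):
    """Keep clusters whose probability reaches the (assumed) threshold."""
    return [c for c in clusters if class_probability(c) >= threshold]

clusters = [
    Cluster(pad=12.3, time=450.0, charge=85.0, logit=3.0),   # p ~ 0.95
    Cluster(pad=12.9, time=452.5, charge=9.0, logit=-2.0),   # p ~ 0.12
    Cluster(pad=40.1, time=910.0, charge=30.0, logit=0.1),   # p ~ 0.52
]
kept = select_clusters(clusters)
print(f"kept {len(kept)} of {len(clusters)} clusters")
```

Raising the threshold rejects more background at the risk of discarding genuine clusters, so the cut is a tunable trade-off rather than a fixed property of the network.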

Once particle tracks are reconstructed, the final measured quantities need to be corrected for detection effects and other phenomena. To return to the camera analogy, this is like correcting for known camera effects such as image blurring or a tilted horizon. This procedure is not done in real time. Detector effects, such as distortions and background contributions, are evaluated and corrected using realistic simulations. Modern simulation tools are used to generate high-energy particle collisions and the subsequent transport of the resulting particles through the detector. As with the processing of experimental data, the full simulation is highly computationally intensive and significantly increases resource requirements.

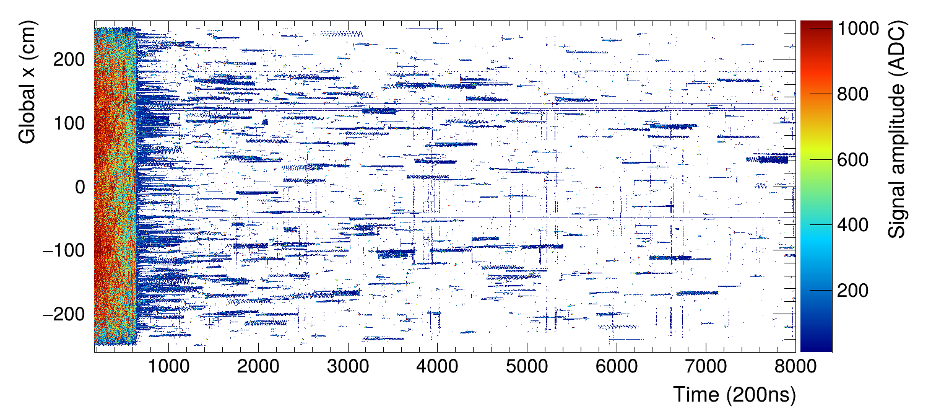

One well-known source of background arises from slow neutrons interacting with the TPC. These interactions produce secondary particles, such as electrons, which generate large spurious signals in the detector. As shown in the figure, they contribute to the clusters reconstructed in experimental data at late times (time > 1000), after the bulk of the collision, so they must be modeled accurately. Unfortunately, simulating the transport of neutrons down to low kinetic energies, where the capture cross section becomes significant, is particularly computationally expensive and increases the overall computing time by roughly an order of magnitude.

TPC clusters reconstructed from data as a function of time, highlighting the signal amplitude.

To address this, a generative neural network is used to directly simulate the final products of these interactions, bypassing the need to simulate the full process. The computational cost of this approach is essentially negligible, making previously infeasible simulations possible within the available computing budget.
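The principle of replacing a costly step-by-step simulation with direct sampling of its end products can be shown with a deliberately simple sketch. Here a one-dimensional random walk stands in for the detailed transport, and a fitted Gaussian stands in for the generative neural network; both are assumptions chosen for brevity, and a real generative model learns a far richer, multi-dimensional distribution. The names `expensive_transport` and `GaussianSurrogate` are hypothetical.

```python
import random
import statistics

def expensive_transport(rng: random.Random, n_steps: int = 400) -> float:
    """Toy stand-in for a detailed transport simulation: every sample needs
    n_steps random draws, and only the final position is kept."""
    x = 0.0
    for _ in range(n_steps):
        x += rng.choice((-1.0, 1.0))
    return x

class GaussianSurrogate:
    """Toy stand-in for a generative model: learn the distribution of the
    final products once, then sample them directly with one draw each."""

    def fit(self, samples):
        self.mu = statistics.fmean(samples)
        self.sigma = statistics.stdev(samples)
        return self

    def sample(self, rng: random.Random, n: int):
        return [rng.gauss(self.mu, self.sigma) for _ in range(n)]

rng = random.Random(42)
# Costly part, done once: 1000 full simulations of 400 steps each.
training = [expensive_transport(rng) for _ in range(1000)]
surrogate = GaussianSurrogate().fit(training)
# Cheap part, repeated as often as needed: one random draw per sample.
fast = surrogate.sample(rng, 5000)
print(f"learned mean {surrogate.mu:.2f}, spread {surrogate.sigma:.2f}")
```

Building the training set still requires full simulations; the saving comes from reusing the trained model for every subsequent sample, each of which costs a single draw instead of hundreds of transport steps.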

These developments demonstrate that machine learning is becoming an integral part of data processing, reconstruction, and simulation in ALICE. As data rates and detector complexity continue to grow, it will play an increasingly important role across all these areas.